Key Points

- Credit card testing bots rapidly cycle through stolen payment card credentials on payment pages. The aim is to test a high volume of cards and find the ones that work, which can then be used to complete fraudulent transactions.

- Unlike traditional card testing bots, AI agent-based credit card testing bots can reason and use stealth browsers to avoid detection.

- Detecting fraudulent AI agents requires looking at network, browser, and especially behavioral signals in combination with page activity.

- Specialized tools (like cside or DataDome) exist to catch these bots by monitoring the browser runtime and fingerprinting signals, helping organizations stop this fraud vector earlier.

What Is AI Credit Card Testing?

AI credit card testing is the use of AI-powered automation to test whether stolen payment card credentials are valid against real merchant checkout flows. Unlike traditional carding bots that operate via raw API calls or simple HTTP requests, AI credit card testing agents use real browser sessions that execute JavaScript, interact with form elements, and mimic human checkout behavior.

A credit card testing operation typically works in stages:

- Obtain a batch of stolen card credentials (from data breaches, purchased on dark web markets, or generated algorithmically)

- Find a merchant site with a checkout flow that can validate cards with small or no charges: donation forms, trial signups, and low-minimum purchases are common targets

- Run automated sessions against the checkout, testing each credential until a successful validation occurs

- Use validated cards for higher-value fraud on other sites or sell them as "verified" in the criminal market

AI-powered credit card testers add a layer of behavioral sophistication: they vary transaction timing, simulate browsing activity before checkout, and adjust behavior based on fraud score responses to avoid triggering detection.

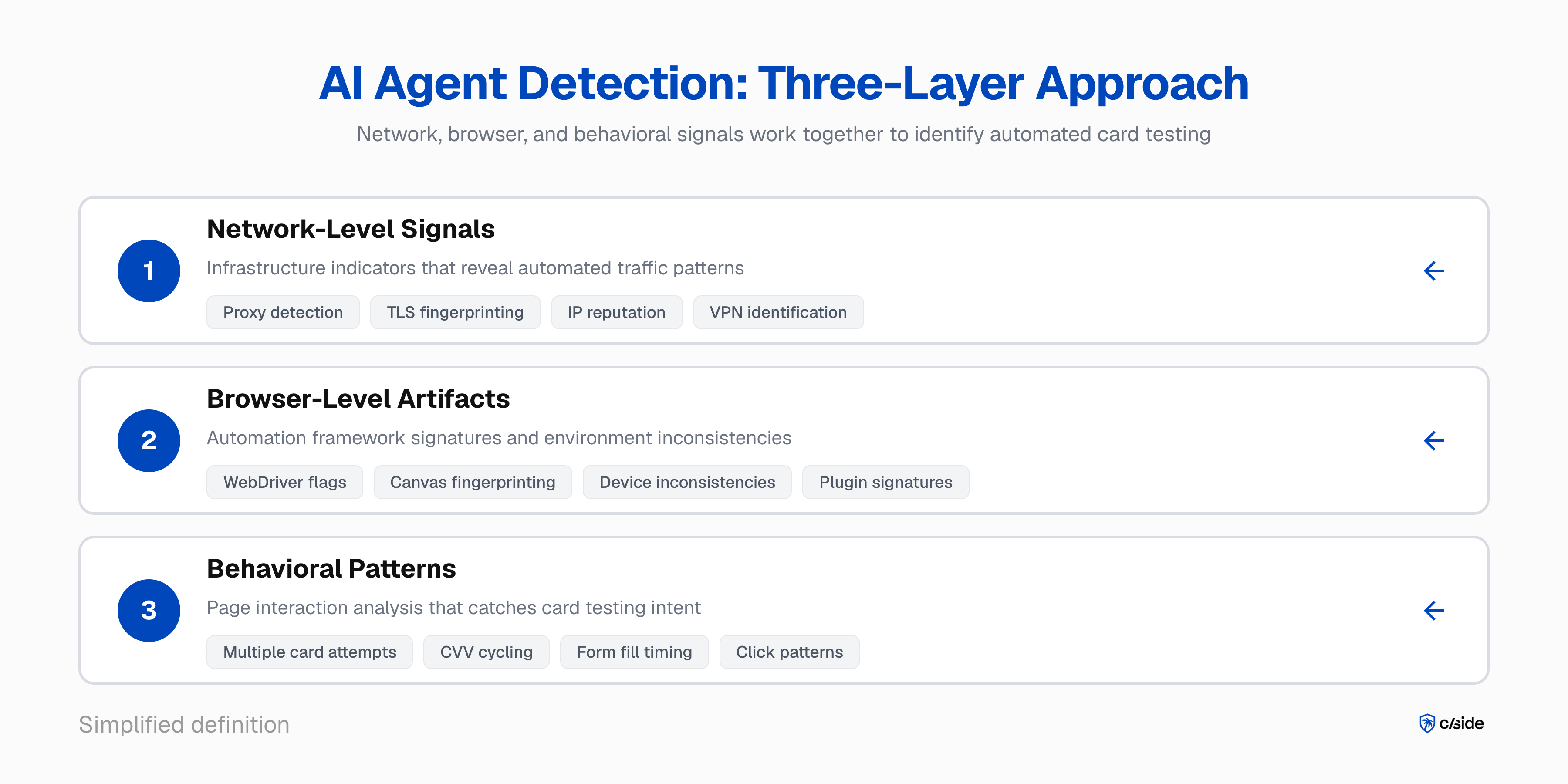

How to Detect AI Agent-Driven Credit Card Testing

Detecting this fraud vector requires advanced fingerprinting combined with specialized AI agent detection.

Detecting AI Agents

- Network, browser, and behavioral signals to identify AI agents: The foundation of catching AI credit card testers is first identifying that a session is being driven by an AI agent at all. This means analyzing network-level indicators like proxy detection and TLS fingerprinting, browser-level artifacts from automation frameworks, and behavioral patterns in how the session interacts with the page.

We break down specific signals cside uses to identify AI agent traffic in our guide to blocking AI agents on your website.
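As a rough illustration of how network, browser, and behavioral signals can be checked for consistency, the sketch below flags common automation tells in a single session. The `SessionSignals` fields, the indicator names, and the assumption that these values have already been collected (by client-side script plus an IP intelligence lookup) are all hypothetical. This is not cside's detection logic, and a stealth browser can mask several of these tells, which is why they feed into a broader score rather than being used alone.

```python
from dataclasses import dataclass


@dataclass
class SessionSignals:
    # Hypothetical, illustrative fields; not any vendor's schema.
    user_agent: str                    # User-Agent header sent by the client
    navigator_webdriver: bool          # navigator.webdriver as reported in the page
    navigator_platform: str            # navigator.platform as reported in the page
    browser_timezone: str              # IANA zone from Intl.DateTimeFormat().resolvedOptions()
    ip_timezone: str                   # IANA zone from an IP geolocation lookup
    ip_is_proxy: bool                  # result of a proxy/VPN lookup on the client IP
    tls_matches_claimed_browser: bool  # TLS ClientHello fingerprint fits the claimed browser
    pointer_moves_before_input: int    # pointer events seen before the first form input


def agent_indicators(s: SessionSignals) -> list[str]:
    """Return the names of automation indicators present in one session."""
    found = []
    # Network layer
    if s.ip_is_proxy:
        found.append("network:proxy_or_vpn")
    if not s.tls_matches_claimed_browser:
        found.append("network:tls_user_agent_mismatch")
    # Browser layer
    if s.navigator_webdriver:
        found.append("browser:webdriver_flag")
    if "HeadlessChrome" in s.user_agent:
        found.append("browser:headless_user_agent")
    if "Windows" in s.user_agent and not s.navigator_platform.startswith("Win"):
        found.append("browser:platform_mismatch")
    if s.browser_timezone != s.ip_timezone:
        found.append("browser:timezone_mismatch")
    # Behavioral layer: the form was filled with no pointer activity at all
    if s.pointer_moves_before_input == 0:
        found.append("behavior:no_pointer_activity")
    return found


session = SessionSignals(
    user_agent="Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
               "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36",
    navigator_webdriver=False,
    navigator_platform="Linux x86_64",
    browser_timezone="America/New_York",
    ip_timezone="Europe/Berlin",
    ip_is_proxy=True,
    tls_matches_claimed_browser=True,
    pointer_moves_before_input=0,
)
print(agent_indicators(session))
```

A legitimate shopper on a VPN will trip some of these checks too, so each indicator is better treated as an input to a risk score than as a reason to block on its own.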

Identifying Fraudulent Agent Behavior (Credit Card Testing)

- Combine agent detection with page activity. Once you know a session is driven by an AI agent, the next step is identifying credit card testing intent. Form fill behavior is one of the strongest signals: testing seven different card numbers in a short window, or cycling through CVV values ten times on the same card, is not normal human behavior. What sets AI agents apart is that they can reason and don't always follow linear patterns, which makes them harder to rate-limit.

When those behavioral signals are combined with other red flags, such as a detected VPN or an inconsistent fingerprint environment, the case for credit card testing becomes much clearer.
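The form fill signals described above (many distinct cards in a short window, or repeated attempts on one card) can be tracked per session with a sliding window. The sketch below is a minimal illustration: the thresholds, the ten-minute window, and the `FormFillMonitor` and `card_token` names are hypothetical. It identifies cards by a keyed hash (HMAC) of the card number, though a card fingerprint returned by your payment processor could serve the same role. It counts submissions per card instead of tracking CVV values, since PCI DSS does not allow card verification codes to be retained after authorization. Because it counts within a window rather than matching a fixed sequence, it does not depend on the attacker entering cards in a linear order.

```python
import hashlib
import hmac
from collections import defaultdict, deque


def card_token(secret: bytes, card_number: str) -> str:
    """Keyed hash of a card number so the raw PAN is never kept in the tracking tables."""
    return hmac.new(secret, card_number.encode(), hashlib.sha256).hexdigest()


class FormFillMonitor:
    """Flags sessions whose payment-form submissions look like card testing."""

    def __init__(self, window_s: float = 600.0, max_distinct_cards: int = 3,
                 max_tries_per_card: int = 3):
        self.window_s = window_s
        self.max_distinct_cards = max_distinct_cards
        self.max_tries_per_card = max_tries_per_card
        # session_id -> deque of (timestamp_seconds, card_token)
        self.attempts: dict[str, deque] = defaultdict(deque)

    def record(self, session_id: str, ts: float, token: str) -> list[str]:
        """Record one payment submission and return any flags raised for the session."""
        window = self.attempts[session_id]
        window.append((ts, token))
        # Drop submissions that fell out of the sliding window.
        while window and ts - window[0][0] > self.window_s:
            window.popleft()

        tries_per_card: dict[str, int] = defaultdict(int)
        for _, t in window:
            tries_per_card[t] += 1

        flags = []
        if len(tries_per_card) > self.max_distinct_cards:
            flags.append("many_distinct_cards")
        if max(tries_per_card.values()) > self.max_tries_per_card:
            flags.append("repeated_tries_same_card")
        return flags


secret = b"example-server-side-key"  # hypothetical; keep real keys out of source code
monitor = FormFillMonitor()
# Placeholder strings stand in for card numbers.
for i, pan in enumerate(["card-A", "card-B", "card-C", "card-D"]):
    flags = monitor.record("session-1", ts=i * 30.0, token=card_token(secret, pan))
print(flags)  # ['many_distinct_cards']
```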

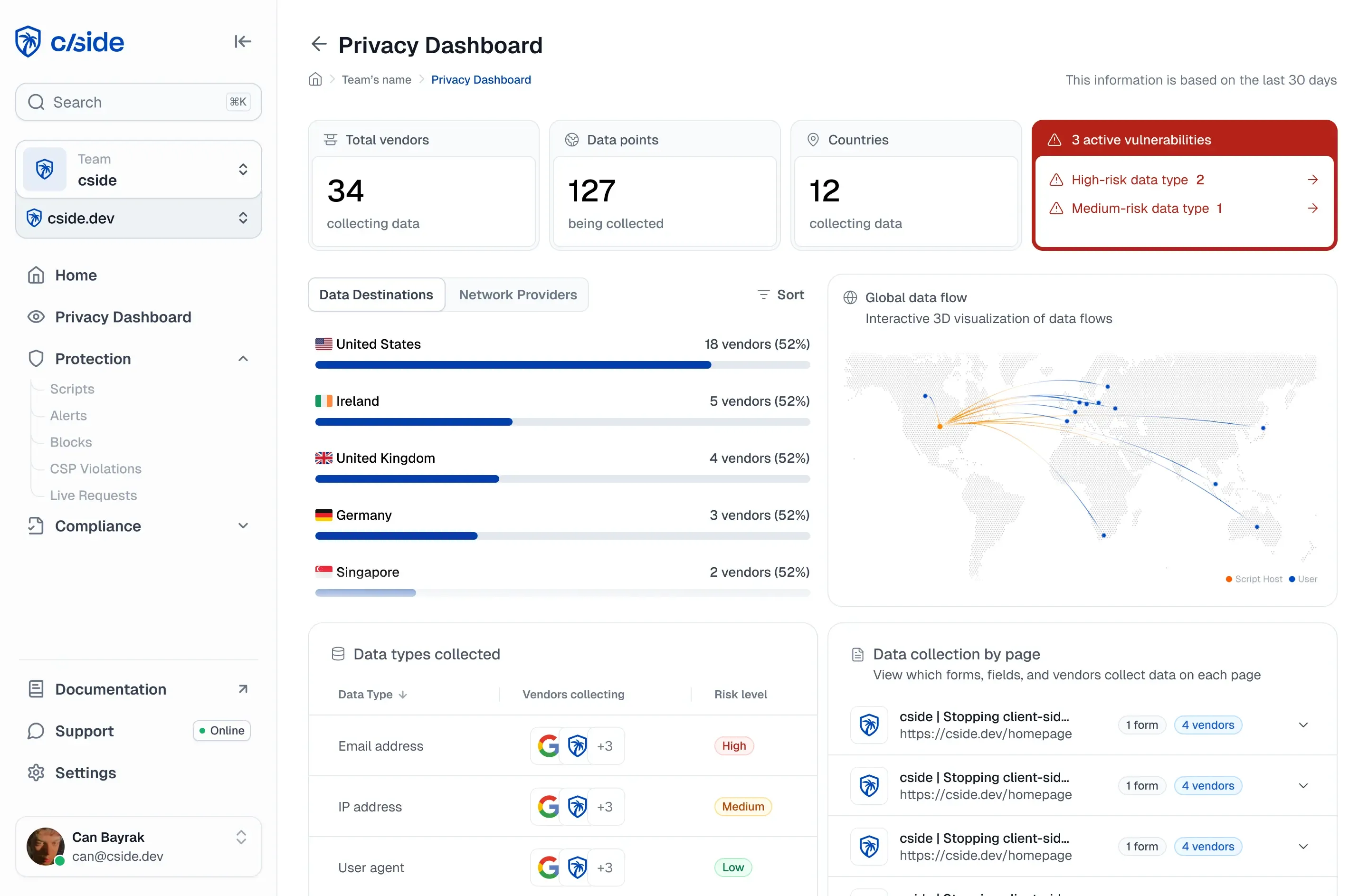

In a vendor tool such as cside's AI agent detection, many signals are combined into a single risk score. That score identifies sessions suspected of testing credit cards and informs the enforcement action (block or blacklist).
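One simple way to fold several signals into a single score is a noisy-OR combination, where each present signal independently contributes some probability of fraud. The sketch below uses that technique with made-up signal names, weights, and thresholds. It illustrates the idea only and is not how cside or any other vendor actually computes its risk score.

```python
# Hypothetical weights: how strongly each signal alone suggests card testing (0..1).
SIGNAL_WEIGHTS = {
    "agent:detected": 0.5,
    "network:vpn_or_proxy": 0.2,
    "browser:fingerprint_inconsistent": 0.3,
    "form:many_distinct_cards": 0.6,
    "form:repeated_tries_same_card": 0.5,
}


def risk_score(signals: list[str]) -> float:
    """Noisy-OR: the session is 'clean' only if every present signal fails to indicate fraud."""
    p_clean = 1.0
    for name in set(signals):  # duplicates count once
        p_clean *= 1.0 - SIGNAL_WEIGHTS.get(name, 0.0)  # unknown signals contribute nothing
    return 1.0 - p_clean


def enforcement_action(score: float, challenge_at: float = 0.5, block_at: float = 0.85) -> str:
    """Map a score to an action; thresholds are hypothetical and need tuning on real traffic."""
    if score >= block_at:
        return "block"
    if score >= challenge_at:
        return "challenge"
    return "allow"


for signals in (
    ["network:vpn_or_proxy"],                        # VPN alone: stays low
    ["agent:detected", "form:many_distinct_cards"],  # agent cycling cards
    ["agent:detected", "network:vpn_or_proxy",
     "browser:fingerprint_inconsistent", "form:many_distinct_cards"],
):
    score = risk_score(signals)
    print(f"{score:.2f} -> {enforcement_action(score)}")
```

Noisy-OR keeps the score between 0 and 1, lets a lone VPN stay in the "allow" range, and pushes the score up quickly as independent red flags stack. Its independence assumption is a simplification: correlated signals will be partly double-counted.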

Example: How an AI Credit Card Testing Attack Plays Out

Consider a mid-size e-commerce company selling consumer electronics, processing around 50,000 transactions per month with a standard checkout flow.

- Target selection. The attacker identifies the site because it has guest checkout, accepts low-value gift card purchases (starting at $5), and returns clear success/failure responses on payment attempts.

- Infrastructure setup. The attacker provisions a fleet of AI agents using a browser automation framework. Each agent runs inside a real Chromium instance with a unique residential proxy IP and a randomized device fingerprint. The attacker loads 10,000 stolen card numbers.

- The testing run. Over 48 hours, agents navigate the site, add a $5 gift card to the cart, and enter a stolen card number at checkout. Successful cards are flagged as "live." Failed attempts rotate to a new IP and fingerprint. The fleet tests roughly 200 cards per hour, spacing transactions to avoid velocity triggers.

- The damage. Of 10,000 cards tested, 600 validate successfully. Those verified cards are resold at a premium or used for high-value purchases elsewhere.
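The figures in this scenario can be sanity-checked with a few lines of arithmetic. The sketch below reuses only the numbers stated above; the variable names are illustrative.

```python
cards_loaded = 10_000
hours = 48
cards_per_hour = 200
cards_validated = 600

attempts_at_stated_pace = cards_per_hour * hours  # close to the 10,000 cards loaded
validation_rate = cards_validated / cards_loaded  # share of tested cards that were live
avg_gap_seconds = 3600 / cards_per_hour           # average gap between attempts, fleet-wide

print(f"attempts over {hours} h at {cards_per_hour}/h: {attempts_at_stated_pace:,}")
print(f"validation rate: {validation_rate:.0%}")
print(f"one attempt every {avg_gap_seconds:.0f} s across the whole fleet")
```

At roughly 200 cards per hour, 48 hours covers about 9,600 attempts, consistent with the 10,000-card batch, and 600 hits is a 6% validation rate. One attempt every 18 seconds on average, spread across many IPs and fingerprints, leaves very little activity per source for velocity rules to catch.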

Why Traditional Detection Fails Against AI Credit Card Testers

Traditional fraud detection relies on velocity rules, IP reputation, device fingerprinting, and user-agent matching. AI credit card testing agents can evade each of them.

- Velocity rules: AI credit card testers space transactions to avoid triggering speed-based thresholds.

- IP reputation: They rotate through residential proxy networks with clean IP histories.

- Device fingerprinting: They present a fresh, realistic fingerprint per session instead of recycling the same one.

- User-agent and header inspection: They run inside real browser sessions with standard headers indistinguishable from legitimate Chrome traffic.
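To see why velocity rules struggle, the sketch below implements a classic per-key sliding-window velocity rule and feeds it the same pace as the example above (about 200 attempts per hour, or one every 18 seconds) in two patterns: once from a single IP, and once with a fresh IP per attempt, as a proxy-rotating fleet would. The limit of 5 attempts per 10 minutes and the `VelocityRule` name are hypothetical.

```python
from collections import defaultdict, deque


class VelocityRule:
    """Flag a key (IP, device ID, ...) that makes more than `limit` attempts in `window_s` seconds."""

    def __init__(self, limit: int = 5, window_s: float = 600.0):
        self.limit = limit
        self.window_s = window_s
        self.events: dict[str, deque] = defaultdict(deque)

    def hit(self, key: str, ts: float) -> bool:
        q = self.events[key]
        q.append(ts)
        # Keep only attempts from the last `window_s` seconds.
        while ts - q[0] >= self.window_s:
            q.popleft()
        return len(q) > self.limit


gap_s = 3600 / 200  # ~200 attempts per hour -> one every 18 s

# Pattern 1: every attempt comes from the same IP.
single_ip = VelocityRule()
single_ip_flags = sum(single_ip.hit("203.0.113.7", i * gap_s) for i in range(100))

# Pattern 2: every attempt comes from a fresh residential proxy IP.
rotating = VelocityRule()
rotating_flags = sum(rotating.hit(f"proxy-ip-{i}", i * gap_s) for i in range(100))

print(single_ip_flags, rotating_flags)
```

The same 100 attempts trip the rule 95 times when they come from one IP and never when each comes from a different IP. Keying the rule on device fingerprints fails the same way when every session presents a fresh fingerprint.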

cside found that traditional detection tools missed AI agents in 8 out of 10 controlled tests.

The Fraud Costs of Credit Card Testing

According to Visa, enumeration attacks (the card network term for credit card testing) are responsible for $1.1 billion in annual fraud losses, and 33% of enumerated accounts experience fraud within five days of being compromised.

- Visa's Acquirer Monitoring Program (VAMP) includes a dedicated enumeration ratio. If more than 20% of a merchant's transactions are flagged as enumeration attacks, the merchant enters the program. For merchants with over 300,000 flagged enumeration attempts in a month at 20%+ of volume, Visa classifies them at the "excessive" level.

- Mastercard's Excessive Fraud Merchant (EFM) program monitors card-not-present (CNP) fraud-to-sales ratios. When a merchant's ratio crosses 0.50% on CNP transactions, fines start at $500 in month two and escalate. The Excessive Chargeback Program (ECP) adds another layer: 100+ chargebacks at a ratio of 150 basis points (1.5%) or more in a single month triggers enrollment.

A single testing run, like the e-commerce example above, can push a merchant from compliant status into a monitoring program. Getting out takes three consecutive clean months, and the fines accumulate the entire time.
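The thresholds listed above can be encoded as a simple monthly check. This is a deliberately simplified sketch that uses only the figures stated in this article: card network rules contain additional conditions, definitions, and tiers that are not modeled here, so treat it as an illustration rather than a compliance tool. The `MonthlyStats` fields are hypothetical. The fraud-to-sales ratio is assumed to be measured by amount, and the chargeback ratio is assumed to be chargebacks divided by the same month's transaction count.

```python
from dataclasses import dataclass


@dataclass
class MonthlyStats:
    # Hypothetical monthly totals for one merchant.
    transactions: int           # all payment transactions in the month
    enumeration_flagged: int    # transactions flagged as enumeration (card testing)
    cnp_sales_amount: float     # card-not-present sales volume
    cnp_fraud_amount: float     # card-not-present fraud volume
    chargebacks: int            # chargebacks received in the month


def monitoring_flags(m: MonthlyStats) -> list[str]:
    """Return which of the programs described above the month's numbers would trip."""
    flags = []
    if m.transactions <= 0:
        return flags

    # Visa VAMP enumeration ratio: 20%+ of transactions flagged; "excessive" above 300,000.
    if m.enumeration_flagged * 100 >= 20 * m.transactions:  # integer math avoids float edge cases
        if m.enumeration_flagged > 300_000:
            flags.append("visa_vamp_enumeration_excessive")
        else:
            flags.append("visa_vamp_enumeration")

    # Mastercard EFM: CNP fraud-to-sales ratio above 0.50%.
    if m.cnp_sales_amount > 0 and m.cnp_fraud_amount / m.cnp_sales_amount > 0.005:
        flags.append("mastercard_efm")

    # Mastercard ECP: 100+ chargebacks and a ratio of 150 basis points (1.5%) or more.
    chargeback_bps = m.chargebacks * 10_000 / m.transactions
    if m.chargebacks >= 100 and chargeback_bps >= 150:
        flags.append("mastercard_ecp")

    return flags


# Illustrative numbers only.
month = MonthlyStats(transactions=80_000, enumeration_flagged=18_000,
                     cnp_sales_amount=2_000_000.0, cnp_fraud_amount=12_000.0,
                     chargebacks=40)
print(monitoring_flags(month))
```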

Enforcement Strategies for AI Agent-Driven Credit Card Testing

Once you can identify suspected credit card testing sessions, you need an enforcement strategy that balances stopping fraud with minimizing friction for legitimate customers.

- Challenge before the payment form. Sessions with elevated risk scores should hit a verification challenge before they reach the payment fields, not after. A behavioral CAPTCHA that requires genuine human interaction adds a layer that AI agents cannot reliably solve without revealing themselves. This is the highest-impact intervention point because it stops the testing attempt before any card data is submitted.

- Blacklist the visitor. If card testing activity is suspected on a particular device, that device can be blacklisted to prevent repeated fraud.

- Correlate and block across sessions. Credit card testing operations run many sessions from the same underlying infrastructure. Session correlation that identifies coordinated patterns, such as similar fingerprint clusters, similar behavioral timing, and similar checkout paths, catches operations that individual session analysis might miss. When a cluster is identified, enforcement can be applied to the entire operation rather than playing whack-a-mole with individual sessions.
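Cross-session correlation can start as simply as grouping sessions on a shared key and acting on the whole group. The sketch below groups sessions by fingerprint cluster, checkout path, and a coarse timing bucket, then blocks every session in a group that is large enough and mostly suspicious, including members that did not look suspicious on their own. The `fingerprint_cluster` value is assumed to come from an upstream step that clusters similar fingerprints; it, the other field names, and the thresholds are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Session:
    session_id: str
    fingerprint_cluster: str          # hypothetical ID from clustering similar fingerprints
    checkout_path: tuple[str, ...]    # pages visited on the way to the payment form
    action_gaps_ms: tuple[int, ...]   # delays between consecutive form actions
    suspected_card_testing: bool      # verdict from per-session analysis


def correlation_key(s: Session, bucket_ms: int = 250) -> tuple:
    """Sessions sharing fingerprint cluster, path, and typical action timing get the same key."""
    timing = round(median(s.action_gaps_ms) / bucket_ms) if s.action_gaps_ms else -1
    return (s.fingerprint_cluster, s.checkout_path, timing)


def sessions_to_block(sessions: list[Session], min_size: int = 5,
                      min_suspect_share: float = 0.5) -> set[str]:
    groups: dict[tuple, list[Session]] = defaultdict(list)
    for s in sessions:
        groups[correlation_key(s)].append(s)

    blocked: set[str] = set()
    for members in groups.values():
        suspects = sum(m.suspected_card_testing for m in members)
        if len(members) >= min_size and suspects / len(members) >= min_suspect_share:
            # Enforce on the whole operation, not just the sessions flagged individually.
            blocked.update(m.session_id for m in members)
    return blocked


path = ("/", "/gift-cards", "/cart", "/checkout")
fleet = [Session(f"bot-{i}", "fp-cluster-17", path, (480, 510, 495), i % 2 == 0)
         for i in range(6)]
humans = [
    Session("human-1", "fp-cluster-3", ("/", "/laptops", "/cart", "/checkout"),
            (1900, 3200, 700), False),
    Session("human-2", "fp-cluster-9", path, (2600, 900, 4100), False),
]
print(sorted(sessions_to_block(fleet + humans)))
```

Only half of the fleet's sessions were flagged individually, but all six are blocked because they share a cluster, path, and timing profile. The minimum group size keeps a few similar-looking legitimate shoppers from being swept up together; these thresholds would need tuning on real traffic.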