What CTEM at the browser layer actually looks like: one third-party script, five findings

Quick answer: Continuous Threat Exposure Management (CTEM), the Gartner-defined framework for continuous exposure reduction, breaks down at the browser layer in most enterprise programs. c/side's live analysis of a single Skeepers customer-feedback widget on a major international retail bank surfaced two high and three medium findings, including a server-controlled sub-script missing from the Content Security Policy and a 360-day tracking cookie scoped to the bank's authentication subdomain, despite zero prior threat-database hits. This article maps each finding to the five CTEM stages and shows why PCI DSS 4.0.1 clauses 6.4.3 and 11.6.1 demand more than a script inventory.

A major international retail bank loads a customer-feedback widget on its main site. The widget sets a 360-day tracking cookie scoped to the entire bank domain, including the authentication subdomain. It dynamically injects a sub-script whose filename is decided by the vendor's server at runtime, and that sub-script is not in the bank's own Content Security Policy. The login pages have no CSP at all.

None of that is hypothetical. It is what c/side found in a deep analysis of one Skeepers / MyFeelBack script running on a live banking property.

This article uses that real breakdown (anonymized, with vendor names retained) to show what Continuous Threat Exposure Management (CTEM) looks like when it is actually applied to the browser layer, and where most programs leave the loop open.

TL;DR

- CTEM (Gartner's framework for treating exposure as a continuous loop) has five stages: scoping, discovery, prioritization, validation, mobilization. Most programs cover the first three for traditional assets and stall at validation and mobilization in the browser.

- A live analysis of a single Skeepers widget on an international bank surfaced two HIGH and three MEDIUM findings, including a server-controlled sub-script not in the CSP allowlist.

- The bank's authentication pages had no CSP header at all, in apparent conflict with PCI DSS 4.0.1 clause 6.4.3.

- A 360-day tracking cookie was scoped to the whole banking domain, riding along with login flows.

- The median third-party inclusion chain on the modern web is three levels deep, with a maximum observed depth of 2,285 (Web Almanac 2025).

- Magecart and skimmer activity compromised over 23 million online transactions in 2025 (Recorded Future via Mastercard).

- Visibility without enforcement is documentation, not protection.

What is CTEM, and where does it usually break?

CTEM is Gartner's framework for treating exposure as a continuous loop across five stages: scoping, discovery, prioritization, validation, and mobilization. The point of the loop is that exposure changes constantly, so the response must be continuous as well.

Most security programs have made real progress on the first three stages for traditional assets. Cloud configurations get scanned. Identity sprawl gets mapped. Vulnerabilities get scored.

Then the loop breaks at validation and mobilization for anything that runs in the browser.

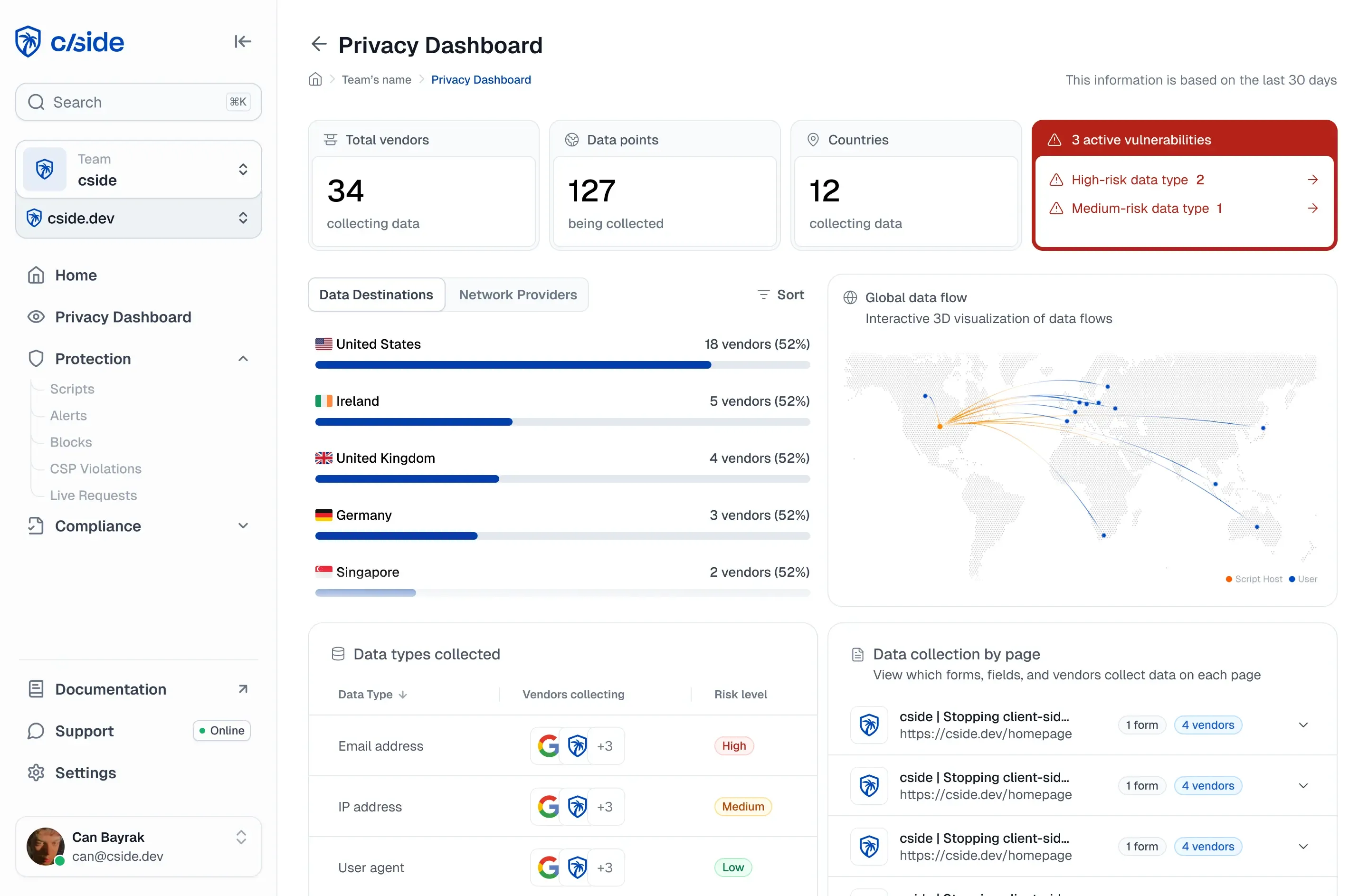

Browser CTEM vs cloud CTEM

| CTEM stage | Cloud and identity | Browser layer |
|---|---|---|
| Scoping | Mature | Often skipped |
| Discovery | Strong tooling | Partial, mostly via tag managers |
| Prioritization | Risk-scored | Rarely scored at all |
| Validation | Pen tests, BAS | Almost never tested |
| Mobilization | Patch, isolate, revoke | Email the marketing team |

Browser exposure does not get mobilized because the security team usually cannot mobilize it. They can see a script. They can write a ticket. They cannot stop the script from executing on the next page load.

That is the gap. The walk-through below is what the gap looks like in production.

Case study: one script, five CTEM-relevant findings

The script in question is the primary loader for the Skeepers (formerly MyFeelBack) customer-feedback widget. It is hosted at cdnactor.myfeelback.com and loaded on the general pages of an international retail bank's main site.

It is not malicious. There were no YARA hits, no entries in any threat database, no static-analysis red flags. That is the point. Most browser exposure does not come from obvious bad actors. It comes from approved, contractually procured third parties whose behavior nobody is actively governing.

Finding 1: The CSP allowlist is incomplete

The bank's general pages ship a Content Security Policy that allowlists *.myfeelback.com. So far, normal.

But the Skeepers loader injects a sub-script at runtime from room.cxr.skeepers.io, plus stylesheets from actor.cxr.skeepers.io, plus a GeoIP call to an AWS Lambda endpoint at c7hn3ry3r4.execute-api.us-east-1.amazonaws.com. None of those domains are in the CSP. The widget is therefore either partly broken in production, or the CSP is being bypassed in ways the security team did not authorize.

This is the kind of mismatch a one-time vendor review never catches. The script ships an *.myfeelback.com URL on day one. The runtime behavior pulls from *.cxr.skeepers.io six months later, after a corporate rebrand. CTEM-grade discovery has to follow the actual runtime calls, not the contract.
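The mismatch described above can be checked mechanically once the runtime script URLs are known. The following is a minimal sketch, not c/side's method: it compares observed script hosts against the `script-src` host sources of a policy, using simplified matching that ignores ports and paths. The policy string and the URL paths in the usage note are hypothetical; only the hostnames come from the analysis. Stylesheets and the GeoIP call would fall under `style-src` and `connect-src`, which this sketch does not cover.

```python
from urllib.parse import urlsplit


def script_src_hosts(csp_header: str) -> list[str]:
    """Return the host-style sources of script-src, falling back to default-src."""
    directives: dict[str, list[str]] = {}
    for part in csp_header.split(";"):
        tokens = part.split()
        if tokens:
            # The first occurrence of a directive wins; later duplicates are ignored.
            directives.setdefault(tokens[0].lower(), tokens[1:])
    sources = directives.get("script-src", directives.get("default-src", []))
    # Drop keywords, nonces and hashes ('self', 'nonce-...') and bare schemes (https:).
    return [s.lower() for s in sources if not s.startswith("'") and not s.endswith(":")]


def host_allowed(host: str, patterns: list[str]) -> bool:
    """Simplified host-source matching: '*', an exact host, or '*.example.com'."""
    host = host.lower()
    for pattern in patterns:
        if pattern == "*":
            return True
        # Strip an optional scheme, then any path, then any port.
        bare = pattern.split("://", 1)[-1].split("/", 1)[0].split(":", 1)[0]
        if bare.startswith("*."):
            # '*.example.com' covers subdomains only, not example.com itself.
            if host.endswith(bare[1:]):
                return True
        elif host == bare:
            return True
    return False


def unlisted_hosts(csp_header: str, observed_urls: list[str]) -> list[str]:
    """Hosts that served scripts at runtime but match no script-src source."""
    patterns = script_src_hosts(csp_header)
    hosts = {urlsplit(url).hostname for url in observed_urls}
    return sorted(h for h in hosts if h and not host_allowed(h, patterns))
```

Fed a hypothetical policy of `script-src 'self' https://*.myfeelback.com` and one observed script URL each on `cdnactor.myfeelback.com` and `room.cxr.skeepers.io`, `unlisted_hosts` returns only the Skeepers host. The check is only as good as its input: the URL list has to come from real page loads, not from the vendor contract.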

Finding 2: A server-controlled sub-script with a moving hash

The loader builds a URL at runtime that looks like:

https://room.cxr.skeepers.io/lib/frontend/handy/js/libraries/{themeDeployment}-libraries.js

The {themeDeployment} part is decided by the vendor's server. The hash of the loader stays stable. The hash of the thing it pulls in changes whenever Skeepers wants. Static review of the loader will pass on Monday and miss a sub-script swap on Tuesday.

This is the classic browser supply-chain pattern. The Polyfill.io incident in 2024 exploited the same gap: a script URL that sites had trusted for years, content that changed after the domain changed hands, and no integrity check from the host page. Once the served code flips, every visitor to every site loading that script becomes a target.

CTEM validation in this context means continuously fingerprinting the runtime-injected code, not the loader.
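As an illustration of that kind of fingerprinting, the sketch below hashes the body of a fetched script and compares it with a stored baseline. It is a simplified assumption of how such a check could work, not a description of any vendor's implementation. The `baseline` dictionary and the function names are hypothetical, and the digest uses the same `sha384-` format as Subresource Integrity, so a reviewed value could be reused as a pin.

```python
import base64
import hashlib


def sri_sha384(body: bytes) -> str:
    """Fingerprint a script body in Subresource Integrity format."""
    digest = hashlib.sha384(body).digest()
    return "sha384-" + base64.b64encode(digest).decode("ascii")


def check_script(url: str, body: bytes, baseline: dict[str, str]) -> str:
    """Classify a fetched script as 'new', 'unchanged', or 'changed'.

    A changed script does NOT overwrite the baseline: the last reviewed
    fingerprint stays in place until someone approves the new one.
    """
    fingerprint = sri_sha384(body)
    previous = baseline.get(url)
    if previous is None:
        baseline[url] = fingerprint
        return "new"
    return "unchanged" if previous == fingerprint else "changed"
```

The important input is the body of the runtime-injected sub-script, captured on every observation. Hashing only the loader would keep returning `unchanged` while the second stage moves underneath it.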

Finding 3: A 360-day tracking cookie scoped to the apex domain

The widget sets a cookie called _MFB_ with these properties:

- Domain: the entire bank apex (everything, including the authentication subdomain)

- Expiry: 360 days

- Contents: a Base64-encoded blob containing a persistent 11-character random visitor ID, campaign and deployment visit counters, page-visit history, click counters, session TTL, and CSS selectors

In parallel, the widget writes 15 keys into localStorage and synchronizes them back to the cookie on every page load.

A few things follow from this:

- The apex-level scope means the cookie travels with requests to the authentication subdomain, even though the widget itself is not loaded there.

- A 360-day pseudonymous identifier on a banking property needs explicit GDPR / CNIL consent and a documented retention justification. That is a Record of Processing Activities (ROPA) question, not just a security one.

- The cross-storage sync (cookie ↔ localStorage) means standard cookie clearing does not reset the visitor ID.
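The scope point in the first bullet follows from how browsers match a cookie's Domain attribute against the request host. A minimal sketch of that rule, assuming hypothetical hostnames (`bank.example`, `auth.bank.example`) and ignoring IP addresses and the public-suffix checks real browsers apply:

```python
def cookie_reaches(cookie_domain: str, request_host: str) -> bool:
    """Simplified cookie domain matching.

    A cookie with Domain=bank.example is sent to bank.example and to every
    subdomain of it. A leading dot in the attribute is ignored.
    """
    domain = cookie_domain.lstrip(".").lower()
    host = request_host.lower()
    return host == domain or host.endswith("." + domain)


def sensitive_hosts_reached(cookie_domain: str, sensitive_hosts: list[str]) -> list[str]:
    """List the sensitive hosts that would receive this cookie."""
    return [h for h in sensitive_hosts if cookie_reaches(cookie_domain, h)]
```

With these assumptions, a cookie scoped to `bank.example` reaches `auth.bank.example`, while the same cookie scoped to `www.bank.example` does not. Narrowing the Domain attribute is therefore one of the cheaper fixes available for this finding.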

This is exactly the kind of long-tail privacy exposure CTEM frameworks are supposed to surface during prioritization.

Finding 4: No CSP on the authentication pages at all

This is the most serious finding. The bank's login flow ships no Content Security Policy header.

The Skeepers script is not loaded there. But that is not the point. The point is that any future script (a marketing tag, an A/B testing tool, a compromised vendor) would execute on the login flow with no restriction and full DOM access to the credential fields.

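PCI DSS 4.0.1 requirement 6.4.3 requires that every script on a payment page be authorized, integrity-checked, and inventoried with a justification. Requirement 11.6.1 requires a change- and tamper-detection mechanism covering the HTTP headers and script contents of those pages. Neither is satisfied by the absence of any header at all.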

The infrastructure exists. A CSP reporting endpoint already runs for the general site. It just has not been extended to the most sensitive pages on the property.
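A gap like this is simple to test for once response headers have been captured per page. The sketch below is an assumed helper, not part of the analysis tooling: it takes already-collected headers keyed by URL (the URLs in the usage note are hypothetical) and reports pages that lack an enforced policy. It checks HTTP headers only; a policy delivered through a `<meta>` tag would need a separate check.

```python
def csp_status(headers: dict[str, str]) -> str:
    """Classify a response as 'enforced', 'report-only', or 'missing'."""
    names = {name.lower() for name in headers}  # header names are case-insensitive
    if "content-security-policy" in names:
        return "enforced"
    if "content-security-policy-report-only" in names:
        return "report-only"
    return "missing"


def pages_without_enforced_csp(responses: dict[str, dict[str, str]]) -> list[str]:
    """Return the URLs whose captured response headers carry no enforced CSP."""
    return sorted(url for url, headers in responses.items() if csp_status(headers) != "enforced")
```

Run against a capture where `https://www.bank.example/` carries a `Content-Security-Policy` header and `https://auth.bank.example/login` carries none, the function returns only the login URL. Scheduling the same check after every deploy turns a one-off finding into a continuous control.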

Finding 5: The survey renders inside the host DOM

When a survey triggers, the widget injects #mfbIframeOverlay and #mfbIframeBlock directly into document.body. That sounds benign, and it is, for the survey itself. But it means the dynamically loaded sub-script (the one with the moving hash) executes in the same origin as the bank's page. Same DOM access, same form access, same cookie access.

A keydown listener is attached for accessibility (a Tab-key focus trap). It is not exfiltrating anything today. But the listener sits on the top-level survey container, and the code that owns it can change whenever the vendor pushes a new theme deployment. CTEM prioritization should flag this as a "trust the vendor's release process" dependency.

What the analysis took, and what it found

For context on the CTEM discovery and validation effort itself:

| Metric | Value |
|---|---|
| Scripts analyzed | 2 (primary loader + sub-script) |
| Tools called | 20+ across script, infra, threat, behavior |
| Total runtime | 281.9 seconds |
| Risk findings | 8 (2 HIGH, 3 MEDIUM, 3 INFO) |
| Existing threat-DB hits | 0 |
| YARA matches | 0 |

Zero existing threat indicators. Two HIGH findings. That is the asymmetry CTEM was designed to catch: the most common browser exposure is not a known-bad script. It is an approved script doing things that were never explicitly authorized.

Why crawlers and traditional scanners cannot find any of this

A reasonable question at this point is: why did none of the bank's existing tooling surface these findings? The answer is that almost nothing in the standard security stack actually executes the page the way a real user does.

Consider what the typical scanner sees:

- Web application scanners (DAST) crawl URLs and inspect responses. Many do not load the page in a full browser, run the third-party JavaScript, or wait for runtime-injected scripts to fetch their second-stage payloads. Skeepers' loader returns 200 OK with valid JS. Done.

- Search-engine and SEO crawlers render with a headless browser, but they do not score third-party scripts for security behavior. They care about content, not cookies.

- Static analysis (SAST) and SCA look at code in your repo. The Skeepers script is not in your repo. It is loaded from someone else's CDN at runtime.

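- CSP report-only mode reports loads from hosts outside the allowlist, but it says nothing about what an allowlisted host serves, and it reports nothing on pages that ship no policy. Once a vendor wildcard is allowlisted, a sub-script whose filename and contents are decided server-side can change without a single report.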

- Vulnerability scanners match CVEs against software inventories. There is no CVE for "your vendor's marketing widget sets a 360-day cookie on your auth subdomain."

- Penetration tests are point-in-time. The Skeepers loader's hash had zero observation history in the 90-day window. The sub-script's hash changes whenever the vendor pushes a new theme. A pentest in March cannot tell you what is loading in October.

The bank's site has CSP infrastructure, a CSP reporting endpoint, and a security team that knows what it is doing. None of that caught any of these findings, because none of those tools watch the runtime browser environment continuously.

This is the structural reason the browser stays in the CTEM blind spot. The exposure is not in code anyone scans. It is in code that loads, mutates, and executes on the user's machine, in a context that ends when the tab closes. Catching it requires watching the runtime, not the repo or the network.

Why visibility-only tools do not close the loop

The Skeepers walk-through is the kind of output a visibility-only tool can produce. The hard part is what comes after.

| Approach | Discovers scripts | Scores risk | Detects change | Stops execution |
|---|---|---|---|---|
| Tag managers | Yes | No | Partial | No |
| CSP only | No | No | Partial | Yes, but blunt |
| Visibility-first browser security | Yes | Yes | Yes | No |
| Enforcement-first browser security | Yes | Yes | Yes | Yes |

Without the last column, the security team's only mobilization path is a ticket to whichever team owns the vendor relationship, usually marketing or CX. That ticket competes with feature work. The vendor pushes a new sub-script before the ticket is closed. The cycle repeats.

This is the operational reason CTEM stalls at the browser, even at well-resourced enterprises.

Why the wider numbers should worry security leaders

The Skeepers walk-through is one script on one site. The aggregate picture is much larger.

- The median third-party inclusion chain on the modern web is 3 levels deep, with a maximum observed depth of 2,285 (Web Almanac 2025).

- Skimmer and Magecart-style attacks compromised more than 23 million online transactions in 2025 (Recorded Future via Mastercard).

- Most CTEM tooling treats this layer as someone else's problem.

A bank loading two Skeepers scripts is a small case. An e-commerce checkout loading 80 to 200 third-party scripts is normal. Exposure scales with script count, inclusion-chain depth, and the cadence at which vendors push changes.

Mapping cside to the CTEM loop, using this case

This is what each CTEM stage looks like for this single script when the cside platform is the runtime control layer.

| CTEM stage | What it means in the browser | Skeepers example |
|---|---|---|
| Scoping | Define which sites and pages are in scope | All bank subdomains, with extra rigor on the auth flow |
| Discovery | Inventory all first, third, and fourth-party scripts | Loader + runtime sub-script + GeoIP Lambda + 3 CSS endpoints |
| Prioritization | Score scripts by data access and behavior | 360-day cookie on auth subdomain, server-controlled sub-script: HIGH |
| Validation | Test what scripts actually do, not what they claim | Confirm CSP gap, confirm cookie scope, fingerprint the sub-script |
| Mobilization | Stop, restrict, or allow execution | Restrict the widget to non-auth pages, hash-pin the sub-script, alert on change |
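The mobilization row can be read as a small decision rule. The sketch below is purely illustrative and does not describe how the cside platform is implemented: the `ScriptLoad` type, the decision labels, and the pin store are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScriptLoad:
    page_host: str     # host of the page that is loading the script
    script_url: str    # URL the script was fetched from
    fingerprint: str   # hash of the body actually served


def decide(load: ScriptLoad, auth_hosts: set[str], pins: dict[str, str]) -> str:
    """Hypothetical policy: restrict to non-auth pages, pin hashes, alert on change."""
    if load.page_host in auth_hosts:
        return "block"    # the widget is not allowed on the auth flow at all
    expected = pins.get(load.script_url)
    if expected is None:
        return "review"   # script never approved: route to triage
    if expected != load.fingerprint:
        return "alert"    # approved URL, unexpected content
    return "allow"
```

The design choice worth noting is that the rule is evaluated per load rather than per audit, which is what separates mobilization from a ticket: the decision is made before the script runs rather than after someone reads a report.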

The screenshots most teams send back say the same thing once cside is deployed. They knew the third-party landscape was big. They did not know it was 16,000 scripts in a typical retailer's week. They did not know how many of those needed PCI review. They did not have a fast path from "we found a problem" to "the problem cannot run."

For e-commerce specifically, this is the line of defense against Magecart and web-skimming campaigns, which keep working precisely because the script keeps running.

What to ask of a browser exposure tool

If you are evaluating tools and want to keep the CTEM frame, the questions are concrete:

- Does it discover scripts that load at runtime, not just at initial page load?

- Does it follow the dependency chain to fourth-party loads?

- Does it detect when a previously clean script's hash changes?

- Does it tell you what each script reads from the DOM, cookies, and storage?

- Can it block or restrict a script in production without redeploying the site?

- Does its evidence map to PCI DSS 6.4.3 and 11.6.1?

- How long is the path from detection to enforcement? Minutes, hours, or a sprint?

If the answer to the last three is anything other than "yes, yes, fast," the tool is doing CTEM discovery without CTEM mobilization.

The framing that matters

CTEM works because it treats exposure as continuous. The browser only fits that frame if the response is continuous too. Quarterly script audits do not match the cadence at which third-party code changes. Tickets to the marketing team do not match the speed of a Magecart skimmer.

The Skeepers case is mild. No malicious actor, a reputable vendor, a well-known SaaS pattern. It still produced two HIGH findings on a major international bank, including one that maps directly to PCI DSS 4.0.1 6.4.3.

If a benign vendor on a careful customer produces this much exposure, the question is not whether the browser layer needs CTEM coverage. The question is how long enterprises will keep treating it as out of scope.

To see what your own exposure looks like under the same lens, browser script monitoring on a live property gives you the discovery, prioritization, and enforcement loop in under an hour.

If you are also weighing a visibility-led platform against an enforcement-led one, the Reflectiz vs cside comparison walks through the trade-offs side by side.